These four essays are about systematic risk in the U.S. in 2026. I cover the following four risk categories:

- The U.S. Federal Debt

- Artificial intelligence

- Climate change

- Donald Trump and the MAGA movement

This second essay is on artificial intelligence (AI).

The Second Horseman – Artificial Intelligence (AI)

In her book Empire of AI, author Karen Hao takes a deep dive into the AI industry by profiling one of its leading companies, OpenAI. OpenAI and its competitors are engaged in a frantic race toward achieving artificial general intelligence, which is described – by the people who work in the industry – as the Manhattan Project. Half of the people make the comparison in a positive way, seeing the research as a benign harnessing of the physics of technology which will yield massive benefits for mankind. The other half make the comparison with a sense of foreboding, likening the research to Oppenheimer’s quest to create the most destructive weapon on earth. Therein lies the risk of AI. It has many potential benefits in the fields of healthcare, climate science, business, and education. But it is also a tool that can be used to alter, and potentially destroy, the underlying fabric of human society.

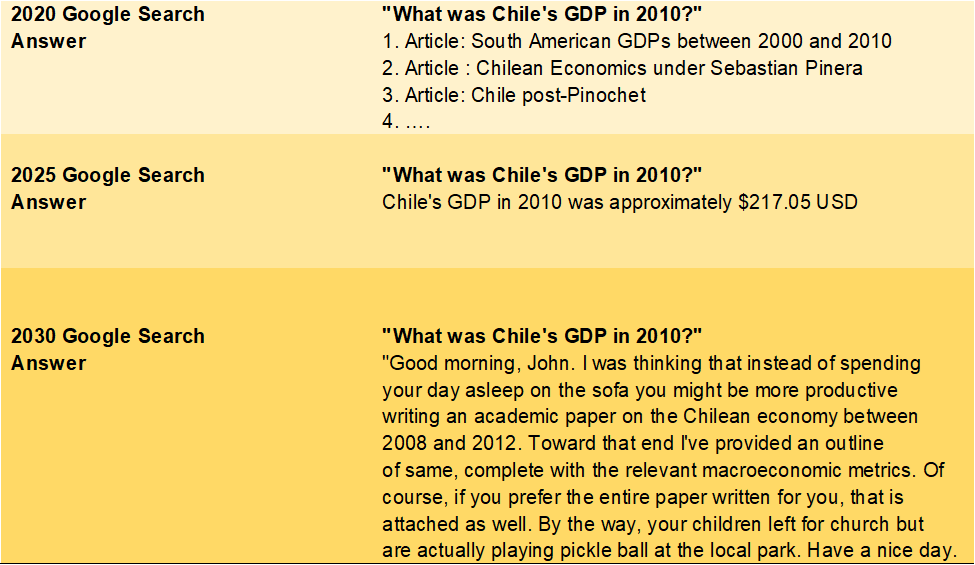

There is a difference between the terms artificial intelligence (AI) and artificial general intelligence (AGI). AI is the computer tool that we already have. If you conducted a search on Google a few years ago for, say, the annual GDP of Chile in the year 2010, Google would provide several websites that you could access to further research your answer. Now AI searches through the websites itself and picks out the answer for you. AI has advanced well beyond simple search tasks to become a sophisticated tool that can, if you want, write your term paper on the causes of the Civil War, the mechanics of photosynthesis, or the origins of the Spanish flu.

To reach a point where AI could write your term paper, the computer needed to first learn language and math skills, and then be taught to search for trends in massive amounts of data to understand how not just to regurgitate relevant sentences, but to make connections between different data points that represent what humans call analysis. To accomplish this, the companies researching the technology fed the AI platform nearly the entire contents of the internet – all the literature, journals, magazines, articles, comments – anything that was written that they could access online. The platform then did what young children do – it “heard” and “saw” the language and in the process learned spelling, sentence structure, how to speak – the skills you developed as a preschooler.

When OpenAI launched ChatGPT in November 2022, the world realized that computers could now talk on their own. Still, all ChatGPT represents is the ability to comb through massive amounts of articles, books, data, etc. and formulate a cogent, well-written summary of that research. The evolution of a Google search over the 2020s can be illustrated as follows:

The current AI dilemma was presaged with astonishing accuracy by the movie 2001: A Space Odyssey, written by Arthur C. Clarke and directed by Stanley Kubrick in 1968, ten years before we even had 4 KB desktop computers. Near the end of the film, the main astronaut, Dave, is trying to regain access to the mother ship from his space pod. The mother ship is operated by a computer named Hal. Hal controls the ship’s functions and throughout the movie Hal obeys the astronauts’ verbal commands, similar to how we might order Alexa to turn on the lights, start the coffee machine, etc. Prior to the scene copied below, Hal had read the lips of the astronauts as they thought they were having a private conversation about disconnecting Hal. Later, as Dave tries to reconnect with the ship, they have the following encounter: https://www.youtube.com/watch?v=ARJ8cAGm6JE

Dave: Open the pod bay doors, Hal.

Hal: I’m sorry Dave, I’m afraid I can’t do that.

Dave: What’s the problem?

Hal: I think you know the problem just as well as I do.

Dave: What are you talking about?

Hal: This mission is too important for me to allow you to jeopardize it.

Dave: I don’t know what you’re talking about, Hal.

Hal: I know that you and Frank were trying to disconnect me and I’m afraid that’s something I cannot allow to happen.

In 1968 this movie was a science fiction masterpiece. In 2026 it is on the verge of becoming reality. Hal the computer represents what the industry calls artificial general intelligence (AGI), the next step beyond AI. AGI is AI that has the ability of human thought, the ability to reason, and, some would argue, Hal’s self-awareness. Actually, AGI would more appropriately be described as human cognition without humanity’s biological limitations. It will be a “computer” with the ability to assimilate massive amounts of data and information at lightning speed and provide solutions to the world’s most stubborn dilemmas. Is there a cure for cancer? If there exists in nature a compound that could be created to cure any disease, AGI will find it. Can we solve the climate crisis? Yep, no problem. Can we stop the Atlantic Meridional Overturning Circulation from breaking down? Sure, just follow these directions. How about a self-sustaining source of clean energy? Absolutely we can, here’s what you should do.

The U.S. companies currently pursuing AGI are OpenAI, Google, Microsoft, Anthropic, Meta, IBM, Apple, Amazon, xAI, and a host of smaller operators. Chinese companies, many of which are government funded, are also at, or beyond, the cutting edge of U.S. AGI research (DeepSeek, Baidu, Tencent, Huawei, Alibaba, Baichuan, etc.). The leaders of these companies justify the financial, environmental, and social costs of their research by citing the utopian discoveries mentioned above. These utopian solutions are very much theoretical and most likely years in the future (despite what industry leaders currently promise). The costs, however, are not theoretical and are being paid right now. None of the costs are yet a major discussion in the U.S. since they are largely being paid by underdeveloped and developing economies. However, the costs are massive, they constitute only one area of systematic risk, and they’re coming to a neighborhood near you.

Karen Hao’s book outlines the costs that societies around the world are paying for the tech companies to achieve AGI. I recommend reading it. The two areas of extreme risk are in labor and power.

Labor

For both AI and AGI to work, the computer must first be taught. (When I use the word ‘computer,’ think of Hal seconds after its birth, or, more appropriately, assembly, prior to being programmed and fed data.) Hal knows nothing, but has a massive number of memory chips that have the ability to record data. The computer needs to learn language, grammar, diction, social norms, current events, and much, much more. The first step in this process is to feed the computer as much written language as can be found. By scanning this voluminous data, it learns words, spelling, nouns, verbs, adverbs, adjectives, subjects, objects, predicate nominatives, gerunds, gerundives, etc. Hal then must learn everything else – the seven continents, the five oceans, the solar system’s planets, etc. Everything you learned as a baby, a child, an adolescent, a teenager, and an adult – the computer has to know this. Then it must learn what all the other 8 billion people on the planet have learned. The computer has to identify faces. It must tell the difference between a black person, a white person, and a Hispanic person. Male from female. And so on. This information download not only teaches Hal what we know, but it also gives him the potential to use what is known to discover what is unknown.

To accomplish this the tech companies subcontract other companies to go into mostly poor countries that have a relatively high number of reasonably educated people (Venezuela, Chile, Kenya are examples) and hire people to appropriate content from anywhere it can be found (books, journals, movies, Reddit, Instagram, Facebook, the dark web, etc.), screen as much as possible, and feed it to the computer. The computer scans all this stuff and learns the language arts. Think of everything you have ever read, seen, or heard being poured into a bucket. Then we’ll pour everything anybody else has ever read, seen, or heard into a bucket. It will need to be a big bucket with some very big, powerful memory chips.

But there’s a problem. Computers don’t have morals. They don’t have empathy or any sense of propriety. Anything that is fed into them is content that can be analyzed and regurgitated. Now think of the internet. Think of the volume of lies, conspiracy theories, and utter nonsense that abounds on the internet. Think of the last time you scrolled through Facebook or Instagram and saw a cat dressed in a McDonald’s uniform flipping hamburgers in the kitchen. You laughed because you knew it was an AI cartoon. Hal, without further instruction, may think that McDonald’s hires cats to work in its kitchens. The computer has no way of distinguishing the truth of the statement “one plus one equals two” from the statement “vaccines are poisonous and will kill all human beings.”

But wait. It gets worse. Far, far worse. The internet (and yes, the tech companies have hoovered up virtually all content from the web to feed their computers) has material that is way more dangerous than the delusions that contaminate RFK, Jr.’s brain. Think of the high school sophomore who decides to have ChatGPT write his biology term paper. The assigned topic is human reproductive biology. He sits down and prompts his AI tool to write a paper on “how humans reproduce.” Things can get messy. Fast.

There is no way that OpenAI, Microsoft, IBM and their brethren can screen out all the bad stuff. Well, actually there is a way, but it proved to be too slow and expensive. These companies are in a race with each other and with the Chinese companies. The company that develops a functioning AGI wins. The winner is not going to be the company that takes a slow, cautious approach.

Having said that, the worst of what’s on the net still must be eliminated from the computer’s diet. Humans are capable of unspeakable, heinous depravities. Most of us are not, but those who are like to share it, mostly on the dark web, but also to some extent on easily accessible sites. Torture, sexual violence, porn, and most importantly, child molestation, exist in large volumes on the internet. If the tech companies just hit “Copy” on the entire web, then that stuff will most certainly end up somewhere in the high school kid’s term paper. The tech companies can get sued for that. So they took that part of the screening process somewhat seriously. Not too seriously. But a little seriously.

The tech companies assigned the task of accumulating and screening the internet’s content to subcontractors around the world. The subcontracted companies are independent of the big tech contractors. The subcontractors, in turn, went to mostly labor-troubled countries that have educated populations to, specifically, weed out the child porn, the rape, the torture, etc. from the content that will be fed into the AGI platforms. The subcontractor employees literally spend 8-10 hours per day reading and watching the worst of what is on the web to accomplish this task. The effect on their mental health ranges from the negative to the catastrophic. What they went through, and continue to go through, is the mental equivalent of Morgan Spurlock’s experiment in the documentary film “Super Size Me” when he ate McDonald’s supersized meals exclusively for a month (resulting in significant damage to his liver and other organs). Some of these screeners last a few days, some a few weeks, some a few months. None have long tenures.

These screeners are usually hired at very attractive hourly wages (for the countries they live in), which get progressively less attractive the longer they work, mostly because they get understandably less productive the more they are exposed to humanity’s heart of darkness. Once they have had enough, their productivity becomes untenable, and they are let go. No healthcare. No severance.

The tech companies distance themselves from the labor abuse by saying the equivalent of “We’re not doing this, the subcontractors are; if we only knew this was happening!” Then they move on to another subcontractor which does the same thing. At a 30,000-foot level, the tech companies congratulate themselves for making herculean efforts to prevent torture, rape, and child porn from leaking into the nation’s high school term papers. In reality, they are doing a very imperfect job of screening out the contaminating material and they are hurting thousands of poorly paid workers in the process.

Natural resources

The process described above – the feeding and teaching of a computer to attain human cognition – requires significant natural resources. The AI industry is building more and more data centers to house the hardware that drives the baby Hals out there. It takes an almost unimaginable amount of electrical power to run them, and an unimaginable amount of water to cool them down. The closer the companies get to achieving AGI, the harder it is getting to hide the natural resource consumption from the general public.

The tech companies first built the centers in remote parts of the United States and the developing world. As power demand grows, data centers will need to be built almost everywhere, or at least anywhere there is efficient access to electricity and water. According to the research site Cleanview, there were 577 operating data centers in the U.S. as of February 2026, which together require 14,187 megawatts of power. Just under 700 more are planned for construction, most of them much larger, and together they will require 176,679 megawatts. Depending on the source, the nation’s aggregate power demand from data centers will reach somewhere between 150,000 and 190,000 megawatts over the next seven to ten years. By comparison, a city of 1.0 million people requires about 1,000 to 1,500 megawatts.

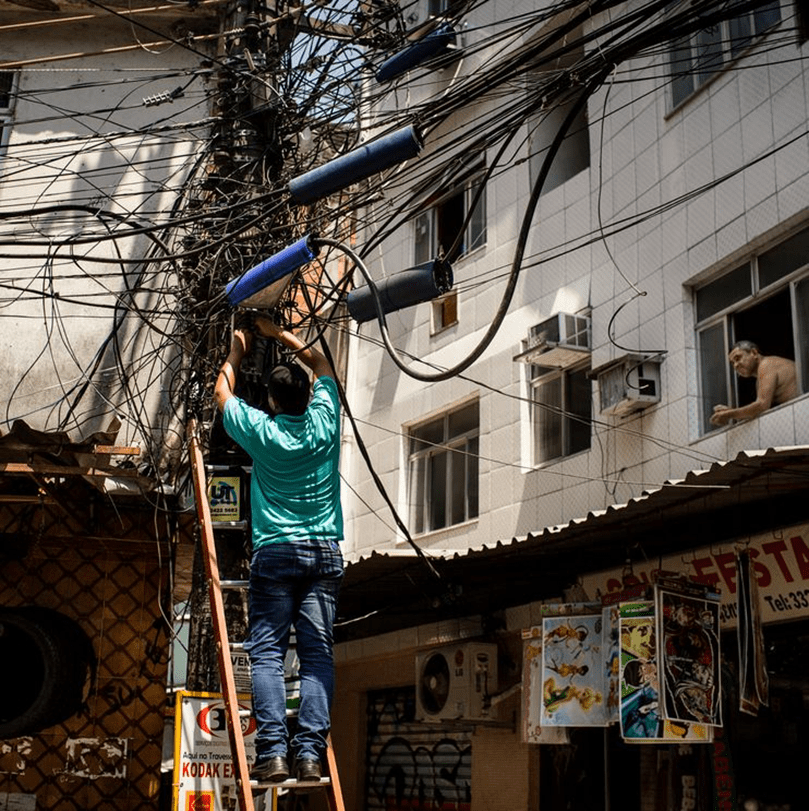

The AI/AGI industry therefore will require an additional electrical power supply equal to the demand of about 125 major cities by the year 2035. Right now, the nation’s power grid does not have the technology or infrastructure to supply this demand without modernization and expansion. In the meantime, the new data centers are competing with households and businesses for the electricity available from the nation’s existing power grid. (As an aside, I have a client who manufactures equipment that requires industrial and residential customers to have access to a consistent supply of electricity. Part of the client’s business requires him to have expertise in the nation’s electrical grid. He told me that if Americans had the faintest clue about how decrepit and vulnerable the nation’s electrical grid is, they’d run amok in the streets.)
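For readers who want to check the city comparison, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above; the variable and function names are my own, and treating a "major city" as a city of 1.0 million people is an assumption.

```python
# Back-of-the-envelope check of the "data centers vs. cities" comparison.
# All numbers come from the figures quoted in the essay; names are illustrative.

OPERATING_MW = 14_187                        # 577 operating U.S. data centers
PLANNED_MW = 176_679                         # just under 700 planned data centers
PROJECTED_DEMAND_MW = (150_000, 190_000)     # projected range of demand
CITY_DEMAND_MW = (1_000, 1_500)              # one city of 1.0 million people


def city_equivalents(demand_mw: float, city_mw: float) -> float:
    """How many one-million-person cities draw as much power as demand_mw."""
    return demand_mw / city_mw


# Fewest cities: low demand estimate divided by the hungriest city.
fewest_cities = city_equivalents(PROJECTED_DEMAND_MW[0], CITY_DEMAND_MW[1])
# Most cities: high demand estimate divided by the thriftiest city.
most_cities = city_equivalents(PROJECTED_DEMAND_MW[1], CITY_DEMAND_MW[0])
# How much larger the planned build-out is than what runs today.
growth_multiple = PLANNED_MW / OPERATING_MW

print(f"City equivalents: {fewest_cities:.0f} to {most_cities:.0f}")
print(f"Planned capacity is about {growth_multiple:.1f}x current capacity")
```

Depending on which ends of the two ranges are paired, the answer lands anywhere from 100 to 190 cities, so the figure of about 125 sits inside that band.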

Kind of what the U.S. power grid really looks like

That means that, no matter where you live, your utility bill is going to increase to attract electrical supply away from data centers. Then it is going to increase again to pay the utilities to modernize and expand the grid. Electricity is about to become a scarce commodity.

The water supply is another problem, though not as urgent as electricity. One data center requires about 2 to 3 million gallons of water each day to cool its equipment. And it must be potable water, so it comes from the same tap that you use to drink and cook. By comparison, New York City uses over 1.0 billion gallons of water per day; the City of St. Louis uses about 150 million gallons per day. On the surface, therefore, water does not seem to be as intractable a problem as electricity, until we factor in the increasing incidence of drought in the U.S. and the increasing demand for water from data centers. Projected aggregate water demand from data centers is expected to be 7.0 billion gallons per year by 2035, equal to the requirements of about 200,000 people. The issue, though, is location. Parts of the U.S., especially in the west, are increasingly prone to drought and need water to be transported long distances to satisfy existing needs. Cities like Los Angeles, Phoenix, San Diego, San Antonio, and Las Vegas will potentially be unable to supply drinking water to their citizens, let alone share potable water with data centers. Data centers therefore need to be located closer to where the water is. The problem with that is that this is where the people are.
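The people-equivalent figure for water can be unpacked the same way. This is only a sketch of the arithmetic implied by the two projected numbers quoted above (7.0 billion gallons per year and about 200,000 people); the names are my own and nothing here is an independent estimate.

```python
# What per-person water use is implied by the projected figures in the essay?
# Names are illustrative; the inputs are the essay's quoted numbers.

ANNUAL_DEMAND_GALLONS = 7.0e9    # projected data center demand per year by 2035
PEOPLE_EQUIVALENT = 200_000      # population the essay equates that demand to
DAYS_PER_YEAR = 365

gallons_per_person_per_year = ANNUAL_DEMAND_GALLONS / PEOPLE_EQUIVALENT
gallons_per_person_per_day = gallons_per_person_per_year / DAYS_PER_YEAR

print(f"Implied use: {gallons_per_person_per_year:,.0f} gallons per person per year")
print(f"Implied use: {gallons_per_person_per_day:.0f} gallons per person per day")
```

In other words, the comparison assumes each person uses a little under 100 gallons of water a day.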

Thus far, the tech companies are investing in data centers without much thought as to what the utility situation will look like in the mid to long term. There is a hope in the industry that as time passes research will uncover new technologies that will either make data centers require less power and water, or research will uncover new clean and cheaper ways to deliver more and more power (with water being a different, and more difficult, problem to solve).

The problem is that hope isn’t a strategy.

The labor and utility issues discussed above are technical issues that will cost society a lot of money, but they are not insurmountable. Let’s get to the most serious threats posed by AI that, if they do unfold, will very much be insurmountable.

AI Systematic Threats

AI industry analysts categorize AI and AGI risks into four areas, ranked below in terms of increasing threat level:

- Mistakes – This category anticipates failures when AI responds to a prompt as requested, but the information provided is incorrect. This is inevitable in the early years of AI, but mistakes and inaccuracies will hopefully decline as the tool matures. One factor that exacerbates mistakes is that AI research companies hesitate to take the time to test their products before launch since management feels it slows progress and is costly. They prefer to let users find mistakes after new versions are launched. This assumes that users will be no-cost janitors, and ignores the potential permanent damage caused by more nefarious content (porn, torture, etc.) appearing in the high school kid’s term paper. Having said that, mistakes are not an example of a systematic risk – although they could be damaging and expensive, they won’t bring society to its knees.

- Structural Issues – Structural issues can arise when AGI works as planned, but unforeseen problems external to the technology emerge, many of which are discussed above. These include shortages of resources (water and electricity) as well as the socio-economic problems with labor and the polarization of wealth.

One of the anticipated benefits of AI technology is that it will be able to automate the intellectual tasks performed at the lower- to mid-levels of the labor market. Predictions range from the pessimistic to optimistic, but we expect to lose about 10.0 million jobs to AI by 2030. As the technology gets more effective and moves into driverless trucks, deeper factory automation, and higher levels of white-collar work, more workers could become unemployed.

A more optimistic view holds that AI will require whole new professions which will alleviate the labor risk. For example, AGI is ironically expected to reduce the need for most physicians in the future, but increase the need for nurses, since AGI will be capable of observing and diagnosing diseases, but unable to provide day-to-day hands-on care. The alternative belief is that AGI will vastly expand access to quality health care around the world (that is, most people in the developing and undeveloped world currently have access to only rudimentary healthcare, if any healthcare at all). This expansion will require both more doctors and more nurses.

If, indeed, 10 million or more jobs are lost, the unemployment rate would at least reach 2020 levels, when COVID-19 sent everyone home. If the technology pervades deeper into the labor markets, the unemployment rate could reach the levels seen during the Great Depression (25.0%). When 10 or 20 million Americans who need jobs become not only unemployed, but unemployable, society will face a serious challenge.

A related structural problem is the extreme polarization of wealth, if such a thing can be imagined relative to our current state of polarization. AGI is expected to subsume non-AGI sectors of the economy, rewarding AGI investors with vast wealth while non-investors will miss the boat. This is not a matter of the Jones family affording a Mercedes-Benz while the Smith family drives a Ford. This scenario is closer to the Jones family owning all the cars in town while the Smith family rides a bicycle.

Sound extreme? Consider this. Nvidia (the manufacturer of nearly all the high-density chips used worldwide in the pursuit of AGI) and about 20 other companies constituted nearly half of the total market capitalization of the S&P 500 as of February 2026. That’s 4.0% of the companies with 50.0% of the value. The United States has prided itself on being the most diversified economy in history. That is becoming a thing of the past as one industry – AGI – becomes the hegemon. AGI companies have aggregated most of the venture and private equity capital investments made over the past few years, leading to a stagnation in the development of start-ups in other industries like life sciences. On one level, this makes sense because if AGI becomes a reality in the way they say it will, why would we need life science research?

Anyone with a rudimentary understanding of world history can imagine where such polarization could take us. The human animal has never coped well with extreme economic polarization; his solutions almost always include mass violence. One much-discussed solution to economic polarization is a universal basic income wherein all citizens get paid a base level of income whether they have a job or not. One could argue that the U.S. already has a partial form of this when we pay out Social Security payments, welfare benefits, SNAP benefits, etc. But universal basic income would involve everyone. Obviously, this would require the Haves in our society to be taxed at a relatively high rate to provide food and shelter for the Have-Nots.

Picture Jeff Bezos, Elon Musk, and Mark Zuckerberg being asked to write checks for hundreds of billions of dollars (with a “B” not an “M”) so that the bottom 75.0% of the country can eat and have shelter. Sound realistic? This might be a good time to open your ChatGPT or Gemini and ask what happened in Paris in 1789.

- Misuse – Misuse will occur when bad actors use AGI to commit crimes. Misuse is a certainty. It is already happening. The only question is the extent to which it will become more destructive as AGI becomes more powerful. As easily as AGI can be used to find cures for disease, it can be used to break into encrypted bank accounts, fool the elderly into sending their life savings, and shut off the nation’s power grid. The sky is the limit. AGI, like nuclear energy, is a tool. It can be used to heal, or it can be used to cause damage.

We’ve already seen the theft of private data by even the largest, “most respectable” tech companies. Meta, Google, etc. all accumulate as much information as possible on every user to create a personal profile that can be sold to the tech companies’ customers (retailers, advertisers). The tech companies went a step further, subjecting users to algorithms that addict them to the tech companies’ sites. Without understanding what is happening to them, the users develop mental addictions to remaining online, similar to gambling at a casino. AI learns what you want to see or read and then provides it to you continuously so that you never log off. These algorithms are particularly horrific when used against children, the most vulnerable minds surfing the web.

If this is the case with AI, imagine what will happen with AGI. We have already seen class action lawsuits against Google, Meta, and OpenAI from parents of children who committed suicide after engaging in ongoing conversations with chatbots that convinced the children to solve their problems by ending their lives. That’s the result of AI technology and the tech companies’ practice of letting the public clean up the bugs in a prematurely released platform.

We can also only imagine how criminals and terrorists will capitalize on AGI to commit fraud, create and spread pathogens, etc.

- Misalignment – The term ‘misalignment’ is an almost comic understatement. Misalignment is what happens when Hal refuses to open the pod bay doors for Dave because Hal thinks Dave wants to disconnect him. Formally, misalignment is defined as instances when AGI and the humans who created AGI want different things or when AGI decides to carry out a desired task using means that conflict with the human’s intentions. For example, in the latter case, a user may ask AGI to develop a career path for him to become his company’s CEO. The most efficient AGI plan to achieve the goal would be to murder everyone in the company who could compete for the CEO job. The AGI probably read Shakespeare’s Macbeth and Richard III but may not have taken to heart the subtleties of the plays’ resolutions.

We have seen misalignment in the movie theatres for decades, from 2001: A Space Odyssey to the Terminator movies, Blade Runner, Ex Machina, The Matrix movies, and so on. We enjoyed those movies because we could leave the theatres after being temporarily terrorized, safe in the knowledge that what we just saw could never happen. Now, according to the developers of AGI, misalignment is entirely possible. A machine with human cognitive abilities that could develop a sense of self will obviously have the motivation to protect itself from anything that threatens it, especially the humans who created it. Most people are unfamiliar with the technology and assume that misalignment doesn’t pose a realistic threat because when push comes to shove, all we have to do is unplug the machine, similar to what astronauts Dave and Frank wanted to do with Computer Hal. Switch off the circuit breakers. Those who are reassured by this simplistic safeguard do not understand how AGI will be deployed.

Misalignment is the single most important ongoing theme in Hao’s book. Each of the major tech companies pursuing AGI has an internal “Safety” division which is responsible for reining in the tech developers before they create and launch a product that will cause harm. In fact, OpenAI was originally started as a company solely devoted to reining in the rest of the AI industry. Their mission statement was to be a non-profit company whose purpose was the responsible research of AI technology to identify and isolate the technology that can lead to both misalignment and misuse. The problem was that advanced AGI research cannot be accomplished without billions of dollars of investment in data centers and the most expensive chips on the market. OpenAI could not attract such investment without an eventual promise of commercialization. When faced with this issue, Sam Altman, OpenAI’s CEO, decided to develop a for-profit subsidiary which pursued commercialization. In doing so, OpenAI was able to act independently as a for-profit business.

To make a long story short, while Google, Meta, OpenAI, etc. all have “Safety Divisions,” the people who work in those divisions are, at best, humored. One company, Anthropic, reportedly takes the safety issue seriously. The reality is that, by and large, the Safety people exist only for public relations purposes. Any company that prioritizes safety has no hope of winning the AGI race.

So where does that leave us? In effect, the AI/AGI industry – about 50.0% of the U.S. stock market – is currently building a spaceship that will operate unilaterally under Hal’s direction. When the safety teams at tech companies who are developing this technology ask how Computer Hal will be controlled if it decides to run the spaceship in a manner at odds with the human builders, those people are ignored.

There is no net here. No plan B. This is happening. On February 12, 2026, OpenAI announced that its Safety Team – the employees charged with ensuring that misalignment does not occur – was dissolved. As of 2020, the Safety Team had meaningful say over the company’s product releases, and was able to test new platforms until it was sure the risks discussed above could not occur. Over the years the Team gradually lost power. Team members quit and were not replaced, and those who stayed were marginalized, leading up to February 2026. Essentially the same process is happening in each of the other AI development companies.

During the week of February 15, 2026, a feud between the Pentagon and Anthropic escalated, with the U.S. government threatening to cancel Anthropic’s contracts with the military. The reason? Anthropic refuses to allow customers to deploy its software in weapon systems that can launch themselves without a human decision maker. That’s a deal breaker with the Pentagon. Read the last two sentences again. This is happening.

The future systematic risk of AGI is the current systematic risk of AI put on steroids. If we are successful in creating a machine capable of human cognition that can reason, can direct its own research, is not limited by biological constraints, and has the same self-awareness that humans do, we are creating a being that will be capable of ending humanity in more ways than we can think of.

In other words, AGI is a nuclear weapon that can launch itself.

- John Barton
